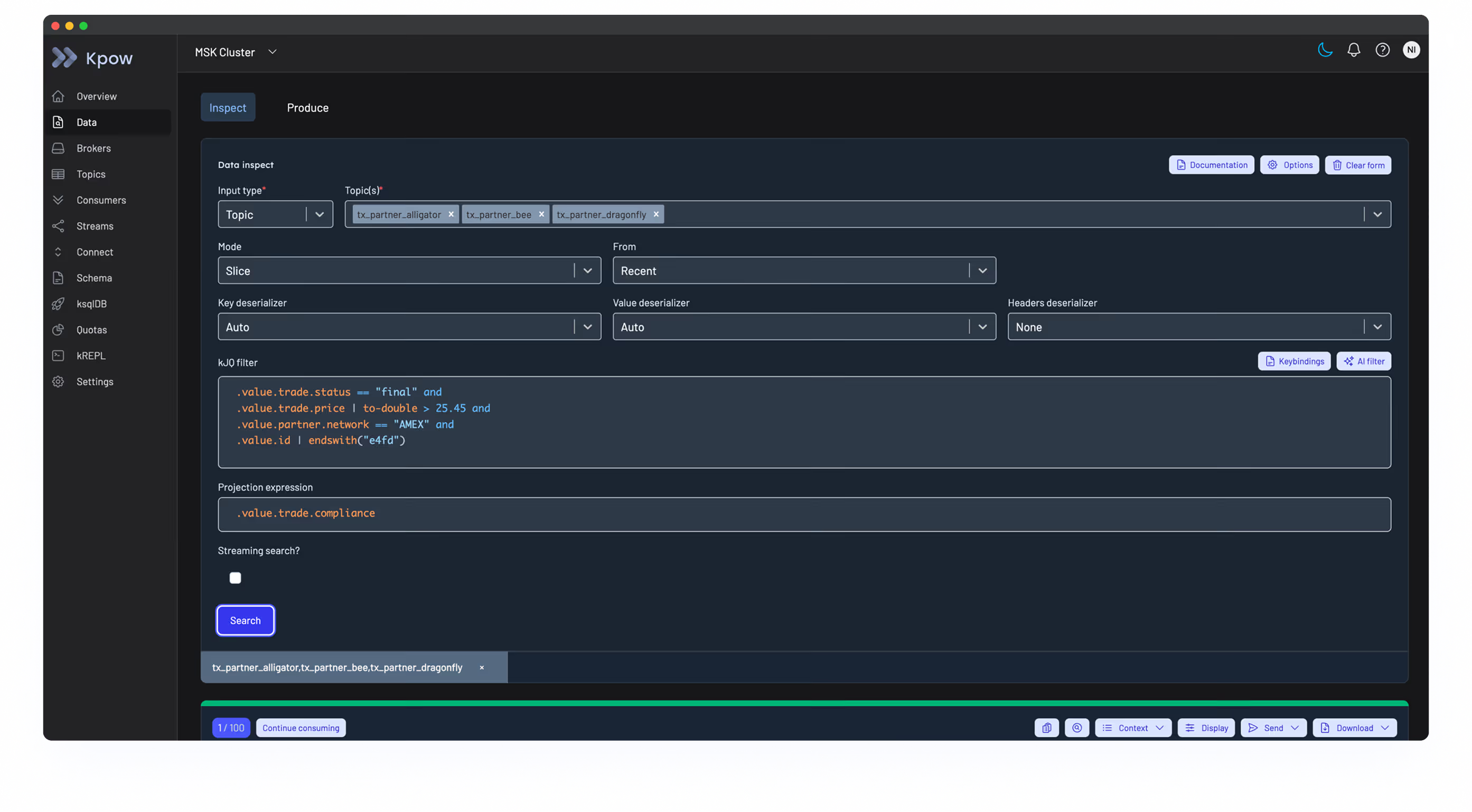

Govern Kafka at pipeline scale

Data engineers at Adidas, Mercadona, and HPE use Kpow to trace message flows, diagnose connector failures, and monitor consumer lag across Confluent, MSK, and self-hosted clusters.

One UI and API for any flavour of Kafka

Kpow connects to any Apache Kafka-compatible platform, including MSK, Confluent, Redpanda, Aiven, Instaclustr, and self-hosted clusters.

Built for logistics platforms that can't afford blind spots

Most Kafka tools give you either developer productivity or operational visibility. Kpow does both. Multi-cluster monitoring gives your ops team a single view of the entire streaming estate, consumer lag trending catches silent failures before they cascade, and Connect diagnostics surface task-level errors in seconds.

Running MSK for some workloads and Confluent for others means two dashboards, two metric formats, and no way to trace a message flow end to end. Kpow connects to every cluster in your estate and gives you one interface to inspect topics, consumer groups, brokers, and schemas across all of them.

A stalled consumer partition means stale inventory data, missed delivery windows, and orders placed against stock that no longer exists. Kpow tracks consumer group lag over time so you can see exactly when lag started, which partitions are affected, and whether members dropped from the group.
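To make lag trending concrete, here is a minimal Python sketch of how per-partition lag can be derived from offsets and how a stalled partition might be flagged from a series of observations. It is not Kpow's implementation: the `Sample` type, the `stalled_partitions` function, and the sample numbers are all hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    """One observation of a partition (hypothetical structure for illustration)."""
    timestamp: int         # seconds since an arbitrary reference point
    partition: int
    end_offset: int        # latest offset written to the partition
    committed_offset: int  # latest offset committed by the consumer group

    @property
    def lag(self) -> int:
        # Lag is how far the group's committed position trails the log end.
        return max(self.end_offset - self.committed_offset, 0)


def stalled_partitions(samples: list[Sample]) -> dict[int, int]:
    """Map each stalled partition to the timestamp at which its committed
    offset was first seen at its current (unchanged) value."""
    by_partition: dict[int, list[Sample]] = {}
    for s in sorted(samples, key=lambda s: s.timestamp):
        by_partition.setdefault(s.partition, []).append(s)

    stalled: dict[int, int] = {}
    for partition, history in by_partition.items():
        last = history[-1]
        if last.lag == 0 or len(history) < 2:
            continue  # caught up, or not enough data to call it a trend
        # Walk backwards to the earliest sample with the same committed offset.
        stall_start = last.timestamp
        for s in reversed(history):
            if s.committed_offset != last.committed_offset:
                break
            stall_start = s.timestamp
        if stall_start < last.timestamp:
            stalled[partition] = stall_start
    return stalled


# Made-up observations: partition 0 keeps up, partition 1 stops committing at t=60.
SAMPLES = [
    Sample(0, 0, 100, 100), Sample(60, 0, 150, 150), Sample(120, 0, 200, 200),
    Sample(0, 1, 100, 90), Sample(60, 1, 160, 120),
    Sample(120, 1, 220, 120), Sample(180, 1, 280, 120),
]

print(stalled_partitions(SAMPLES))  # {1: 60}
```

A single point-in-time snapshot would show only that partition 1 has lag. The history is what reveals that commits stopped at the 60-second mark while producers kept writing.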

As Kafka grows from an engineering tool to critical supply chain infrastructure, it needs the same access controls as your databases. Kpow provides RBAC with SAML and OAuth 2.0 integration, data masking for sensitive fields, and audit logs that record every action with user attribution and timestamps.

A connector that reports "RUNNING" while one of three tasks has silently failed is a common Kafka Connect failure mode. Kpow surfaces task-level status, stack traces, and error context directly in the UI. No more searching across container logs to find which task failed and why.
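The failure mode above is visible in the status document that the Kafka Connect REST API returns from `GET /connectors/{name}/status`, which reports the connector state separately from each task's state. The sketch below parses a sample payload of that shape and picks out failed tasks. The connector name, worker addresses, and trace text are made up, and the helper functions are hypothetical illustrations rather than anything Kpow exposes.

```python
import json

# Sample payload in the shape returned by GET /connectors/{name}/status.
# All values here are invented for illustration.
STATUS_JSON = """
{
  "name": "inventory-sink",
  "connector": {"state": "RUNNING", "worker_id": "10.0.0.1:8083"},
  "tasks": [
    {"id": 0, "state": "RUNNING", "worker_id": "10.0.0.1:8083"},
    {"id": 1, "state": "FAILED", "worker_id": "10.0.0.2:8083",
     "trace": "org.apache.kafka.connect.errors.ConnectException: example failure"},
    {"id": 2, "state": "RUNNING", "worker_id": "10.0.0.3:8083"}
  ]
}
"""


def failed_tasks(status: dict) -> list[dict]:
    """Return the task entries whose state is FAILED."""
    return [t for t in status.get("tasks", []) if t.get("state") == "FAILED"]


def summarize(status: dict) -> str:
    """Describe connector health using task states, not just the connector state."""
    connector_state = status["connector"]["state"]
    failed = failed_tasks(status)
    if not failed:
        return f"{status['name']}: connector {connector_state}, all tasks healthy"
    ids = ", ".join(str(t["id"]) for t in failed)
    return f"{status['name']}: connector {connector_state}, but task(s) {ids} FAILED"


status = json.loads(STATUS_JSON)
print(summarize(status))
for task in failed_tasks(status):
    # The "trace" field carries the stack trace for a failed task.
    print(task["id"], task["worker_id"], task.get("trace", ""))
```

Checking only the top-level `connector.state` would report this connector as healthy, which is why task-level status matters.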

How a national food distributor eliminated Kafka blind spots across 12+ clusters

Challenge

A national food distributor had invested four years building their Kafka platform across multiple managed providers. Their home-grown tooling could not scale. When issues arose, the engineering team was manually combing through Connect log files, stitching together dashboards from different providers, and diagnosing consumer group lag with CLI snapshots that showed point-in-time state but no trends.

Solution

Kpow unified their Kafka operations with:

- A single instance managing 12+ clusters across providers

- Consumer group monitoring that immediately surfaced a five-minute gap in consumer activity, revealing a silent API failure

- Connect task-level diagnostics that reduced connector troubleshooting from hours to minutes

Result

- Single pane of glass across 12+ heterogeneous Kafka clusters

- Connect errors resolved in minutes, down from hours of log searching

- Proactive consumer group monitoring preventing customer-facing outages

- Offset-level data inspection that caught a production data flow issue before it reached end users

Learn from teams running Kafka at scale

See how distributors and logistics platforms architect Kafka for supply chain reliability, operational visibility, and data governance without sacrificing engineering velocity.

How NORD/LB transformed Kafka operations and cut debugging time in half

A German financial institution overcomes critical compliance gaps and operational inefficiencies to build a scalable, enterprise-grade streaming data platform.

Customer Obsession Driving Innovation with Kafka at Pickles

Innovation and evolution are central to the Pickles delivery model. Find out how Kafka and Kpow help the Pickles team deliver.

Kpow Delivers Big Gains for Fintech Giant Pepperstone

Melbourne is the beating heart of Australia's quietly surging fintech industry, and for almost a decade local tech-enabled trading company Pepperstone has been carving its own niche, developing a burgeoning startup into one of the largest Forex and CFD brokers in the world. The company's...

Launch Kpow in your environment in under 10 minutes

Connect to any Kafka cluster in minutes. Deploy via Docker, Helm, or JAR. Full access for 30 days.
