Accelerating incident response: advanced filters, streaming search, and AI-powered queries

Overview

In the first part of this series, we explored how establishing foundational data inspection allows teams to turn raw, unparsed data dumps into readable, shaped payloads. However, achieving visibility is only the first half of the equation. When a production incident occurs, engineering teams must optimize for speed.

Finding a rare anomaly across millions of Kafka messages requires high-velocity search capabilities. This article explores the root causes of friction during critical investigations and demonstrates how Kpow combines server-side filtering, artificial intelligence, and continuous scanning to accelerate incident response.

This is Part 2 of the Kafka Data Management with Kpow: Unlocking Engineering Productivity series. You can read the full strategy in the main series article and access the associated posts as they become available:

- Part 1: Foundational Kafka Data Inspection: Shaping Payloads and Optimizing Visibility

- Part 2: Accelerating Incident Response: Advanced Filters, Streaming Search, and AI-Powered Queries (This article)

- Part 3: Triage, Repair, and Replay: Integrated Kafka Remediation Workflows

- Part 4: Defense in Depth: Unifying RBAC and Data Policies for Transparent Governance

About Factor House

Factor House is a leader in real-time data tooling, empowering engineers with innovative solutions for Apache Kafka® and Apache Flink®.

Our flagship product, Kpow for Apache Kafka, is the market-leading enterprise solution for Kafka management and monitoring.

Start your free 30-day trial or explore our live multi-cluster demo environment to see Kpow in action.

Problem: Friction in Incident Response

Application teams frequently face a Velocity Gap during production outages: high-volume topics process thousands of events per second, so locating a specific, rare failure means sifting through millions of messages. To find these events, developers must construct complex search logic to match timestamps, evaluate nested array values, or isolate specific error codes.

Writing this logic introduces a steep syntax learning curve. Working out the exact expression to filter deeply nested data takes time, which directly delays incident response. When engineers are forced to focus on how to query the data rather than on the data itself, time to resolution grows.

Limitations of Manual Search Workflows

Standard operational workflows often fail under the pressure of an active incident. Engineers using basic consumer scripts are forced to manually advance offsets, repeatedly executing commands and hoping to catch the right event before consumer timeouts occur.

This trial-and-error approach scales poorly. During an outage, developers struggle with basic JSON parsers that cannot handle complex type conversions or temporal math. To bypass the learning curve, teams rely on sharing massive text files of old, brittle query commands. Relying on static cheat sheets rather than dynamic search tools adds severe friction to the resolution process.

Advanced Filtering, AI, and Streaming Search in Kpow

Kpow eliminates the friction of manual polling and syntax struggles by providing a high-velocity search engine. By integrating advanced kJQ filtering, artificial intelligence, and automated query progression, Kpow transforms incident response into a frictionless workflow.

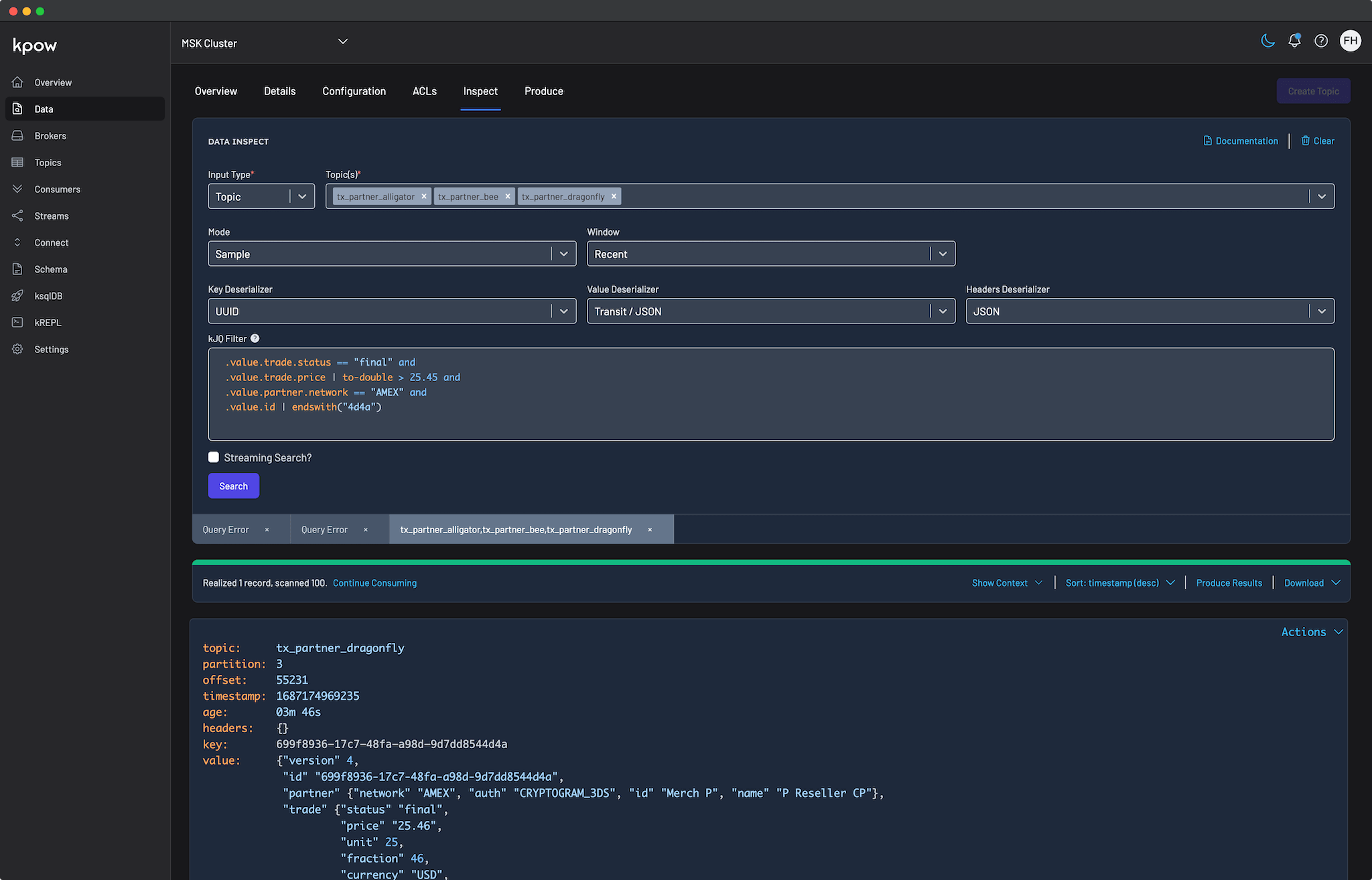

Precision with Advanced kJQ Filtering

To isolate highly specific events, Kpow provides advanced kJQ filtering. This jq-like language executes directly on the server, allowing Kpow to rapidly slice through data before it ever reaches the browser. kJQ natively supports complex logical operators, type casting, and precise ISO 8601 duration mathematics.

For example, an engineer investigating unaudited, high-value provisional trades over the last two hours can execute the following advanced filter:

.value.partner.network == "VISA" and .value.trade.status == "provisional"
and .value.trade.compliance.audit == false and .value.trade.price | to-double > 40
and .timestamp > now - pt120m

This single query isolates specific nested string values, evaluates a boolean field (compliance.audit), casts a string-based price to a double for mathematical comparison, and applies a strict 120-minute temporal window using record metadata.
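To make the five conditions concrete, here is a minimal Python sketch of the same checks applied to a single record. It is not how Kpow evaluates kJQ; it only restates the logic described above. The record layout (a dict with `value` and an epoch-millisecond `timestamp`) and the names `matches` and `sample` are assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone


def matches(record: dict, now: datetime) -> bool:
    """Restate the filter's conditions in plain Python (illustrative only)."""
    value = record["value"]
    trade = value["trade"]
    # Assumption: the record timestamp is epoch milliseconds.
    record_time = datetime.fromtimestamp(record["timestamp"] / 1000, tz=timezone.utc)
    return (
        value["partner"]["network"] == "VISA"             # nested string match
        and trade["status"] == "provisional"              # nested string match
        and trade["compliance"]["audit"] is False         # boolean field
        and float(trade["price"]) > 40                    # string price cast to a double
        and record_time > now - timedelta(minutes=120)    # 120-minute window
    )


# Hypothetical record, 30 minutes old relative to a fixed "now".
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
sample = {
    "timestamp": int((now - timedelta(minutes=30)).timestamp() * 1000),
    "value": {
        "partner": {"network": "VISA"},
        "trade": {
            "status": "provisional",
            "price": "41.50",
            "compliance": {"audit": False},
        },
    },
}
print(matches(sample, now))  # True
```

The sketch raises a KeyError on records missing any of these fields; how kJQ treats missing fields is not covered here.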

Eliminating the Syntax Barrier with AI

While advanced kJQ is powerful, writing complex syntax during a high-pressure outage can be daunting. To make this functionality instantly accessible to all engineers, Kpow integrates Bring Your Own AI (BYO AI) capabilities supporting AWS Bedrock, OpenAI, Anthropic, and Ollama.

Operators can bypass the syntax learning curve entirely by using natural language prompts. If an engineer needs to find specific transactions, they simply type their request into the AI prompt interface:

Show me all trades where partner network is VISA

Kpow's AI integration instantly translates this natural language request into a schema-aware, syntactically validated kJQ filter. This allows developers to interrogate complex data structures effortlessly.

Continuous Discovery with Streaming Search

Finding a rare event often means searching beyond the initial batch of results. Instead of forcing users to manually click to continue polling, Kpow offers Streaming Search.

By enabling the Streaming Search option, Kpow automatically and continuously progresses the query. The search runs persistently until the defined result limits are reached or the topic partitions are completely exhausted. This replaces manual polling with automated discovery, allowing engineers to define their search criteria via natural language or kJQ while Kpow handles the continuous data scanning.
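The stopping behaviour described above can be sketched in a few lines of Python. This is a conceptual model only, not Kpow's implementation: each inner list stands in for one batch of records that a user would otherwise fetch by hand, and the names `streaming_search`, `batches`, and `result_limit` are hypothetical.

```python
from typing import Callable, Dict, Iterable, Iterator, List


def streaming_search(
    batches: Iterable[List[Dict]],
    predicate: Callable[[Dict], bool],
    result_limit: int,
) -> Iterator[Dict]:
    """Scan batch after batch until the limit is reached or the data runs out."""
    if result_limit <= 0:
        return
    found = 0
    for batch in batches:  # each batch stands in for one manual "continue" click
        for record in batch:
            if predicate(record):
                yield record
                found += 1
                if found >= result_limit:
                    return  # result limit reached: stop scanning


# Hypothetical data: three batches, with matches spread across them.
batches = [
    [{"offset": 0, "code": 200}, {"offset": 1, "code": 500}],
    [{"offset": 2, "code": 200}],
    [{"offset": 3, "code": 500}, {"offset": 4, "code": 500}],
]
hits = list(streaming_search(batches, lambda r: r["code"] == 500, result_limit=2))
print([r["offset"] for r in hits])  # [1, 3]
```

The search crosses the empty second batch without any user action and stops as soon as the second match is found, which is the difference from polling one batch at a time.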

Conclusion

In modern streaming architectures, minimizing the time to resolution requires highly automated, intelligent search capabilities. By combining advanced server-side data slicing with continuous streaming search, Kpow eliminates the need for manual offset tracking. Furthermore, by integrating AI models, Kpow removes the syntax learning curve, allowing any developer to instantly generate complex filters using natural language.

Once the problematic event is isolated, teams must take action to correct the pipeline. In the next part of this series, Triage, Repair, and Replay: Integrated Kafka Remediation Workflows, we will explore how Kpow enables teams to safely manage consumer offsets, re-inject dead-letter queue messages, and repair data payloads directly from the UI.

Next steps

Explore Kpow in your own environment with a free 30-day trial.

If you need assistance managing your Kafka environment, reach out to our engineering support team at support@factorhouse.io.