Kafka scaling best practices: An in-depth primer

Table of contents

Kafka is designed to scale, but it does not scale automatically. The decisions you make at the beginning, around partition counts, replication, consumer group design, and broker sizing, determine whether your cluster grows cleanly or accumulates operational debt that becomes expensive to unwind later.

This article pulls from published engineering work at LinkedIn, Uber, Pinterest, Cloudflare, Shopify, Wix, and Robinhood, alongside Apache KIPs and Confluent's production documentation, to give you a grounded view of what scaling Kafka actually looks like in practice.

How to scale a Kafka cluster

Scaling a Kafka cluster means increasing its capacity across four separate dimensions: brokers, partitions, consumers, and storage. Each one is independent, and each has its own ceiling and operational cost.

Adding brokers increases raw throughput capacity, but by itself does nothing for existing topics. Kafka does not automatically rebalance partitions to new brokers. Only newly created topics land on a new broker; for everything already running, you need to trigger a reassignment explicitly, either via a manual kafka-reassign-partitions run or through a tool like Cruise Control. LinkedIn built Cruise Control specifically because manually managing partition distribution across a cluster of 4,000 brokers is not practical. Cruise Control treats broker load, disk utilisation, rack distribution, and leader balance as optimisation goals rather than manual inputs.

Increasing partition counts is the primary lever for producer and consumer throughput on a given topic, because partitions are the unit of parallelism. The standard formula is max(t/p, t/c), where t is target throughput, p is per-partition produce throughput, and c is per-partition consume throughput, measured on your hardware. The caveat is that for keyed topics you cannot increase partition count on a live topic without breaking key-to-partition routing, so the decision needs to be made before traffic arrives.

Scaling consumers is bounded by partition count within a consumer group. You can add consumer instances freely, but any instance in excess of the partition count sits idle. For slow-consumer workloads or when partition count cannot increase, the options are Confluent's Parallel Consumer for in-process parallelism, or a consumer proxy architecture. Uber's uForwarder, Wix's gRPC fan-out proxy, and Robinhood's Kafkaproxy sidecar each converge on the same pattern: consume once per topic, fan out to downstream consumers, and decouple throughput scaling from partition counts.

Scaling storage has historically meant scaling brokers proportionally. Tiered storage, now GA in Kafka 3.9 via KIP-405, changes this by offloading cold log segments to object storage. Uber has been running tiered storage in production for several years and describes faster broker recovery as one of the main operational wins: a new or replaced broker no longer needs to re-replicate terabytes of cold data to become current.

Each of these levers interacts with the others. What follows covers the specific configuration decisions, documented trade-offs, and patterns from production deployments that determine whether scaling each dimension is clean or operationally expensive.

Understanding the scale ceiling first

Before making configuration decisions, it helps to calibrate against what production Kafka clusters actually handle. LinkedIn operates more than 100 clusters with 4,000 brokers and roughly 7 million partitions, processing over 7 trillion messages per day. Pinterest pushes more than 800 billion messages per day at 15 million messages per second peak, across more than 2,000 brokers in AWS. Shopify peaked at 66 million messages per second during Black Friday and Cyber Monday.

These numbers are useful not because your cluster needs to match them, but because the teams that built them have documented what broke along the way.

Partitioning strategy

How many partitions to create

The canonical sizing formula, from Jun Rao's Confluent post, remains the standard starting point: measure single-partition producer throughput (p) and single-partition consumer throughput (c) on your hardware, then calculate the required partition count as max(t/p, t/c) for your target throughput t. Add headroom beyond that, because consumer parallelism is bounded by partition count.

For ZooKeeper-backed clusters, Confluent's documentation recommends staying below 4,000 partitions per broker and 200,000 per cluster. Beyond these thresholds, controller failover slows noticeably because the controller propagates LeaderAndIsr information serially. KRaft removes this bottleneck. Confluent's lab benchmarks on a 2-million-partition cluster show drastically faster controlled-shutdown and uncontrolled-failure recovery compared to the ZooKeeper equivalent.

Even on KRaft, partition count is still bounded by per-broker file-descriptor limits, replica-fetcher threads, and metadata RAM. These are practical ceilings, not architectural ones.

Keyed topics and the repartitioning problem

For keyed topics, over-provision at creation time. Once a topic is in production, you cannot increase partition count without breaking the hash(key) % num_partitions mapping that routes messages to partitions. Confluent's documentation is explicit about this. If you anticipate growth, it is considerably easier to start with more partitions than you need today than to deal with the downstream consequences of repartitioning a live topic.

There are also cases where the standard rule breaks down in the other direction. Netflix's Real-Time Distributed Graph uses a separate Kafka topic per node and edge type so each can be tuned and scaled independently. The New York Times stores every article since 1851 in a single infinite-retention partition, accepting the per-topic throughput ceiling because publication rate is low. These are deliberate trade-offs, not mistakes.

Avoiding partition skew

Key distribution is the most common cause of hot partitions. LinkedIn's answer to this was Cruise Control, a goal-based rebalancer that considers rack awareness, capacity, leader balance, and disk utilisation in its optimisation proposals. It is now the de facto partition rebalancer in the open ecosystem and ships with AWS MSK, Strimzi, and Canonical's Charmed Kafka.

When adding brokers, be aware that Kafka does not automatically move existing partitions to new brokers. Only newly created partitions land on a new broker. You need to run Cruise Control or trigger a reassignment explicitly. When doing so, throttle the reassignment using --throttle <bytes/sec> to avoid saturating broker network and causing ISR shrinkage.

Producer configuration

Compression

Compression is one of the higher-leverage tuning options available on the producer side. The available codecs are none, gzip, snappy, lz4, and zstd. Confluent's general recommendation is lz4 for performance and zstd when you want better compression ratios without the CPU cost of gzip.

Cloudflare published concrete benchmarks on their real rrdns.recordbatch workload: Snappy achieved around 43% of original size at 446 MB/s compression throughput, while LZ4 reached 40% of original size at 594 MB/s compression and 2,428 MB/s decompression. Zstandard at level 1 achieved 24% of original size at 409 MB/s compression. Cloudflare ultimately standardised on Snappy due to Go client compatibility issues with LZ4 at the time. Their post also found that combining Snappy with 1-second batching produced an 8x size reduction on a low-volume topic.

One point worth noting: if your producer and topic compression codecs do not match, the broker is forced to decompress and recompress each batch, which burns CPU. Keep them aligned.

Batching and linger.ms

In Apache Kafka 4.0, the default linger.ms was changed from 0 to 5 milliseconds. The upstream rationale is that in production the throughput and compression gains nearly always outweigh the additional latency. This is now the default rather than a tuning trick.

Robinhood's migration to WarpStream for logging workloads illustrates the cost trade-off at the extreme end. By pushing batch sizes up significantly and accepting an increase in average produce latency from 0.2 seconds to 0.45 seconds, they achieved a 45% net cost reduction. That trade-off may not make sense for your workloads, but it does demonstrate how sensitive cost can be to batch sizing when storage is S3.

The counter-example is Shopify's BFCM 2025 scale testing, which found that partition increases, not larger batches, were the lever needed to maintain data freshness during traffic spikes. Producer batching and partition count address different constraints.

Consumer group scaling

The partition-to-consumer ceiling

Within a consumer group, at most one consumer instance can be assigned to a partition. Excess consumers sit idle. This creates a hard ceiling: maximum parallelism equals the topic's partition count. If you cannot or do not want to add partitions to a live keyed topic, you have a few options.

Confluent's Parallel Consumer (open-source, Apache 2.0) lets a single consumer thread pool process messages from one partition in parallel, with three ordering modes: KEY, UNORDERED, and PARTITION. It is specifically aimed at slow-consumer workloads involving database calls or HTTP requests. The trade-off is that exactly-once semantics are more complex to manage.

At larger scale, a consumer proxy becomes the convergent answer. Uber's uForwarder, Wix's push-based gRPC fan-out proxy, and Robinhood's Kafkaproxy sidecar all address the same fundamental problem: the partition count ceiling, head-of-line blocking, and fragmented multi-language client behaviour. Wix found they were consuming each produced byte roughly four times across different consumer groups and built a proxy that consumes each topic once and fans out via gRPC, reducing their Kafka bill by 30%.

Rebalancing

The legacy eager rebalancing protocol revokes all partitions from all consumers on every rebalance event. For large consumer groups, this can pause consumption for seconds to minutes.

KIP-429, introduced in Kafka 2.4 and the default since Kafka 3.0, provides incremental cooperative rebalancing via CooperativeStickyAssignor. Only the partitions that need to move are revoked during a rebalance; all others continue processing. In environments running Kafka 3.0 or later, there is little reason to stay on the eager assignor.

For Kubernetes deployments specifically, static membership (group.instance.id, KIP-345) is the other essential lever. A consumer that restarts with the same instance ID does not trigger a rebalance, provided it returns within session.timeout.ms. This matters in practice because autoscaling events that add or remove consumers can otherwise cause repeated rebalances that destabilise consumption, which is exactly the problem Robinhood ran into before building their proxy.

Monitoring consumer lag

LinkedIn's Burrow remains one of the better approaches for lag monitoring. Rather than relying on static thresholds, it consumes the __consumer_offsets topic, tracks a sliding window per partition, and issues status judgements based on whether consumer offsets are advancing relative to broker offsets. This makes it far more reliable for wildcard consumers and MirrorMaker than threshold-based alerting.

Cloudflare's pattern is also worth noting: they auto-create a high-lag alert for every topic at topic-creation time, using time-based lag rather than offset-based lag. Time-based lag measures the difference between when the last committed offset was produced and when it was consumed, which is more meaningful for SLOs than raw offset numbers.

Broker infrastructure

Sizing

Kafka brokers are not typically CPU-bound unless TLS, compression, or a high partition count is involved. Pinterest found that enabling TLS at scale changed the per-connection cost significantly enough to require raising their broker heap from 4 GB to 8 GB.

Memory allocation should prioritise the OS page cache over the JVM heap. Kafka relies heavily on the page cache for storing and serving messages. The standard recommendation is 4 to 8 GB of heap, with the rest of available RAM left for the page cache. Never co-locate Kafka with other memory-hungry processes.

For GC configuration, the LinkedIn and Confluent baseline uses G1GC with Xms and Xmx set to the same value to avoid heap-resize jitter, a MaxGCPauseMillis of 20, and InitiatingHeapOccupancyPercent of 35. Netflix switched to Generational ZGC on Java 21 for sub-millisecond pauses on large-heap brokers. That is worth considering if you have a clear reason to run heaps larger than 16 GB, but G1GC remains the sensible baseline.

At the OS level: set nofile to 100,000 or more, configure vm.swappiness=1 to prevent the kernel from swapping out page-cached log data, raise network buffers, and mount storage with noatime,nodiratime on XFS or ext4.

Rack-aware replication

Configure broker.rack to your AZ identifier so that replicas are distributed across zones with RF=3. Then enable follower fetching via replica.selector.class=org.apache.kafka.common.replica.RackAwareReplicaSelector and set client.rack on consumers. This allows consumers to prefer same-AZ replicas and can significantly reduce cross-AZ network costs, which are frequently the dominant cost line in cloud Kafka deployments.

KIP-881, available since Kafka 3.4, extends this further by allowing RangeAssignor and CooperativeStickyAssignor to use rack data when assigning partitions to consumers.

Tiered storage

Tiered storage separates compute and storage by offloading older log segments to object stores such as S3, GCS, or Azure Blob. KIP-405 reached GA in Kafka 3.9 (November 2024). Uber has been running tiered storage in production for several years and reports that the key wins are independent compute/storage scaling and faster broker recovery, since a new broker no longer needs to re-replicate terabytes of cold data on joining the cluster.

If you are evaluating greenfield analytics-style Kafka workloads at scale, tiered storage is worth considering up front rather than retrofitting later. The cost gravity in the ecosystem is moving toward S3-backed storage, and the options for doing so are broader than they were two years ago.

ZooKeeper to KRaft migration

This is not a future consideration. ZooKeeper mode was deprecated in Kafka 3.5 and removed in Kafka 4.0. Support for ZooKeeper-based clusters ends roughly November 2025. Confluent Platform 8.0 removed ZooKeeper. If you are running any ZooKeeper-backed Kafka cluster, migration planning should already be underway.

KRaft became production-ready in Apache Kafka 3.3 and reached full feature parity with ZooKeeper mode in Kafka 3.9. The migration path involves provisioning a dedicated KRaft controller quorum, running through a dual-write phase where metadata is written to both ZooKeeper and KRaft, then rolling brokers to KRaft mode one at a time before decommissioning the ZooKeeper ensemble.

A few things to be aware of before starting: there is no downgrade path once migration is finalised. SASL/SCRAM for controllers is not supported during migration. Brokers in dual-write mode use additional CPU and memory. And combined controller/broker mode is not supported for production migration targets.

Confluent Cloud completed the largest known migration, moving thousands of clusters to KRaft without breaching SLAs. One widely cited outcome from a 50-node financial services cluster: controller failover time dropped from 5 to 7 seconds to under 1 second.

How Kpow can help

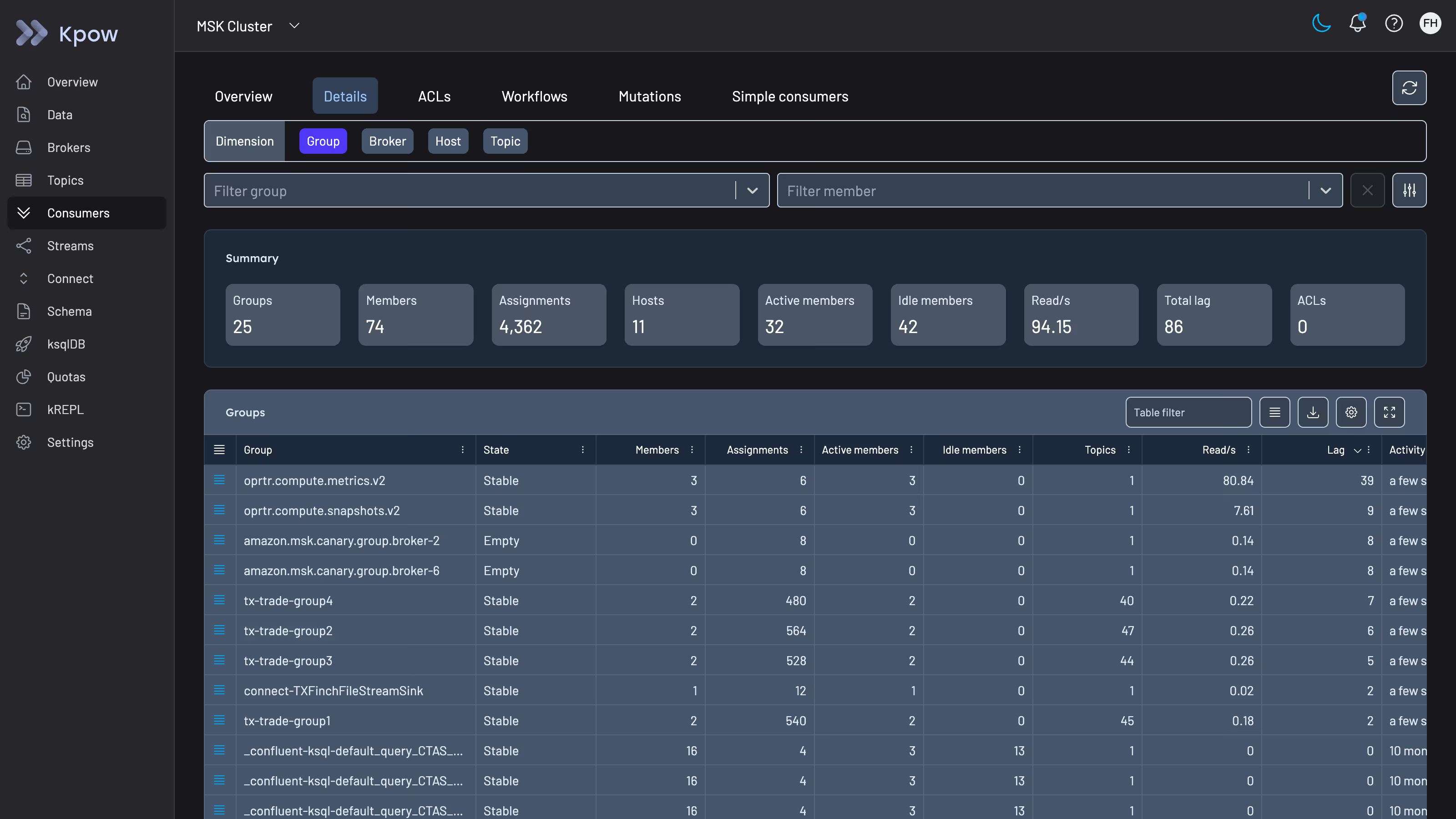

Scaling a Kafka cluster involves a lot of moving parts, and visibility is frequently the gap. When you are troubleshooting a partition skew problem, investigating consumer lag across dozens of groups, or working through the state of a reassignment, having clear access to your cluster's internal state saves significant time.

Kpow is a Kafka management and observability tool built by Factor House. It gives you a live view of consumer group lag, partition assignment, topic configuration, and broker health in a single interface. If you are running multiple clusters, it supports those as a unified view as well. For teams working through the scaling changes described in this article, having that visibility during and after configuration changes reduces the feedback loop considerably.

You can connect Kpow to any Kafka cluster in minutes and try it free for 30 days, whether you are running on bare metal, Kubernetes, or a managed service.

Summary

The decisions that constrain Kafka at scale tend to compound. Partition counts that seemed reasonable at launch become structural limits. Consumer groups on legacy rebalancing protocols create operational instability under autoscaling. ZooKeeper clusters that were not migrated before support ended become a liability.

Most of the patterns that work at scale, cooperative rebalancing, rack-aware follower fetching, KRaft, Cruise Control for partition balance, and tiered storage for cost, are well-documented and available without commercial dependencies. The engineering blogs from LinkedIn, Uber, Cloudflare, and others are primary sources worth reading in full if you are operating at significant scale or heading toward it.