Kafka partition key best practices

Table of contents

The partition key is the most consequential design decision you make for a Kafka topic. It determines message ordering, consumer parallelism, log compaction behavior, and whether your cluster develops hot spots under load. Getting it wrong early is expensive to fix later.

This guide covers what partition keys actually do at the implementation level, common patterns and anti-patterns, and practical guidance on monitoring and migration. Tools like Kpow can help you observe partition behavior across your cluster, which is covered toward the end.

What partition keys are and what they do

A Kafka producer record is a (key, value, headers, timestamp) tuple. When the producer sends a record, the partitioner decides which partition it lands on. The default Java DefaultPartitioner follows this logic:

if (partition is explicitly specified) → use it

else if (keyBytes != null) → partition = murmur2(keyBytes) % numPartitions

else → sticky partitioner picks a partition until the batch fills

Murmur2 is a non-cryptographic 32-bit hash chosen for speed and distribution. The critical detail is the modulo operation: partition assignment is hash(key) % N, where N is the partition count. Any change to N reshuffles the entire mapping, which breaks ordering guarantees for existing keys. Every Kafka practitioner guide repeats this warning, and for good reason.

Null keys

Null-keyed records are handled differently depending on your Kafka version:

- Pre-Kafka 2.4: round-robin per record, producing even distribution but small, inefficient batches.

- Kafka 2.4+ (KIP-480): the

UniformStickyPartitionerpins to one partition until the batch fills, then rotates. Better batching, but the original implementation worsened skew when a broker was slow. - Kafka 3.3+ (KIP-794):

UniformStickyPartitioneris@Deprecated. The current recommendation is to remove the partitioner class configuration and setpartitioner.ignore.keys=trueinstead.

Null keys are appropriate when ordering is irrelevant and downstream consumers have no per-entity locality requirements, such as fire-and-forget log shipping. They are a problem when the topic is compacted, when consumers expect per-entity ordering, or when you anticipate adding stateful stream processing later.

Log compaction

With cleanup.policy=compact, Kafka's log-cleaner retains only the latest value per key per partition. This requires non-null keys. The broker exposes NoKeyCompactedTopicRecordsPerSec precisely so you can alert when null-keyed records land on a compacted topic. This metric should always be zero on compacted topics.

Compaction is also per-partition. If the same logical entity ends up under different keys due to a mutable field change, or on different partitions because partition count increased, compaction will not deduplicate across those entries. Compacted topics need immutable keys.

Kafka producer partition strategies

The table below covers the built-in options:

Custom partitioners give you precise control but come with real implementation complexity. The cross-language hashing problem described below is the canonical example of how easily a custom partitioning scheme can break. Confluent's guidance is to stick with the default unless you have a clear, measurable reason to change.

The cross-language hashing problem

librdkafka (the C library underlying Python, Go, and other non-JVM Kafka clients) defaults to CRC32, not murmur2. A Python producer and a Java producer writing to the same topic with the same logical key will land records on different partitions unless you explicitly set partitioner=murmur2_random in librdkafka.

This matters in mixed-language environments, and it affects Kafka Connect tasks and CDC connectors, which are typically JVM-based and will use murmur2. If you have multiple producer languages writing to the same topic, pin the partitioner explicitly in every client.

Kafka partition key best practices

Choose a high-cardinality, immutable identifier

The most important thing you can do is choose the right key before the topic goes into production. The decision frames are:

- What is the smallest entity that must stay ordered together? That entity's ID is your key.

- Is that field immutable? If it can change (email address, username, account status, IP address), do not use it as a key.

- Does it have enough distinct values? For even distribution, you generally need at least 20 times as many distinct key values as partitions. With fewer than that, the binomial variance is high and some partitions will get significantly more load than others.

System-generated identifiers (UUIDs, customer IDs, aggregate IDs, order IDs) satisfy all three criteria. User-editable fields almost never do.

Avoid mutable fields

Using a mutable field as a partition key creates two compounding problems.

First, ordering breaks. Events for the same logical entity before and after the field changes hash to different partitions. Any stateful consumer that expects per-entity ordering will process those events out of sequence, silently.

Second, log compaction breaks. A compacted topic keyed by email accumulates stale entries whenever users change their email address. The old key and the new key are distinct, so the log-cleaner cannot merge them. The compacted log grows indefinitely without correctly representing the latest state of each entity.

The well-established guidance from Confluent, Cloudurable, and others: key compacted topics by an immutable, system-generated entity ID, never by anything the application or user can mutate.

Watch key cardinality

Low-cardinality keys (region, country, plan tier, status enum) guarantee hot partitions. If you only have five regions and thirty partitions, at most five partitions will ever receive traffic.

High-cardinality keys can also cause hot partitions when the distribution is skewed. Tenant IDs where one enterprise customer generates orders of magnitude more traffic than others, or user IDs with celebrity users, follow a Zipf distribution. The math looks fine on average, but in practice one or two keys drive the majority of throughput.

Both cases require intervention, but through different mechanisms.

Handle hot partitions with composite keys or salting

Composite keys combine two fields: tenantId|entityId, region|customerId, shardKey|primaryKey. This widens the cardinality of the routing key while preserving ordering for the inner entity. Use composite keys when one field alone does not have enough cardinality, or when one field has a skewed distribution.

Key salting appends a random bucket suffix to a hot key: userId#<0..K-1>. This distributes a single hot entity across K partitions. The trade-off is that per-entity ordering is lost across those salt buckets, so consumers must be designed to merge by the un-salted prefix. Salting works well when downstream processing is associative and order-insensitive (counts, sums, deduplication to a database).

Time-window bucketing adds a time component: userId|2025-10-21T10:00. This is useful for bursty single keys where the burst is time-bounded.

Dedicated topics for celebrity keys are the most expensive option but fully isolate their load. Reserve this for cases where the other approaches are not viable.

New Relic's events pipeline is a documented example: the top 1.5% of query identifiers drove roughly 90% of events on their aggregation topic. Their fix was a composite key combining query ID with a time-window start time, spreading hot queries across partitions in time-bounded chunks.

Do not use UniformStickyPartitioner for keyed traffic

This warrants a specific call-out because it is a common mistake. UniformStickyPartitioner explicitly ignores partition keys. Records with the same key are not guaranteed to land on the same partition. The class has been @Deprecated since Kafka 3.3, but it appears in older documentation and can still be set explicitly. If you see UniformStickyPartitioner in your producer configuration and your topic has keyed traffic, remove it.

Over-provision partitions at topic creation

Adding partitions to a live topic with keyed traffic breaks the hash(key) % N mapping. Keys that previously routed to partition X will route to a different partition after the count changes. For stateful Kafka Streams applications, this is worse: state stores partition by the same hash, so an expansion also corrupts the state mapping.

Start with more partitions than you need today. It is much cheaper to over-provision upfront than to migrate later. The practical upper bound is around 4,000 partitions per broker on conservative deployments. Clusters with 100,000+ partitions have caused outages even under no traffic, because each partition represents file handles, metadata, and replica-fetcher threads.

Be careful with null keys on compacted topics

If your topic has cleanup.policy=compact, monitor NoKeyCompactedTopicRecordsPerSec. A value above zero means null-keyed records are reaching the topic and compaction is doing nothing for them. Producers sending null keys to a compacted topic are usually misconfigured. Alert on this metric; it should always be zero.

Standardise the hash function across all producers

If only one language produces to a topic, the default hash function is whatever that client uses. If multiple languages produce to the same topic and rely on consistent key-to-partition routing, you must pin the hash function explicitly. Set partitioner=murmur2_random in librdkafka-based clients so they match the JVM default. Verify CDC connectors and Kafka Connect tasks, which are JVM-based and use murmur2 regardless of other producer languages in your stack.

How to change your key strategy safely

Once a topic is in production, you cannot change the partition count or key field in place without breaking ordering. The documented migration pattern, used by teams at AppsFlyer and described in Confluent's documentation, is:

- Run a side consumer that reads the live topic and simulates the new key, computing what partition each record would land on. Collect distribution metrics to validate the new key before committing to it.

- Create a new topic with the new partition count and key schema.

- Dual-write via a feature flag. Producers emit to both the old and new topic simultaneously.

- Bring up new consumers reading from the new topic. Verify they produce the same outputs as the old consumers.

- Drain old consumers, cut traffic over, decommission the old topic.

- Communicate the migration plan to all downstream teams before flipping the flag. Partition keys are a cross-team contract. Changing them affects every service that reads from the topic, every stateful processor, and potentially every database that receives derived output.

After the cutover, re-tune linger.ms, batch.size, and buffer.memory. Different keys have different temporal clustering properties, and batching dynamics change in ways that are not always immediately obvious.

Monitoring partition key health

The metrics that matter most for key-related issues:

Operationally, alert when the max-to-average per-partition byte rate ratio exceeds 1.5 to 2.0. A ratio under 1.2 generally requires no action. A ratio above 2.0 is worth treating as urgent: investigate whether to apply a composite key, salting, or a dedicated topic for celebrity keys.

For topic-level partition counts referenced in the Kafka topic partition best practices guide, the same thresholds apply: per-broker partition counts approaching 3,500 warrant attention regardless of traffic levels.

How Kpow helps with partition key monitoring

Choosing a good partition key is a design-time decision, but validating and maintaining it is an operational one. Kpow provides the visibility that makes this practical at scale.

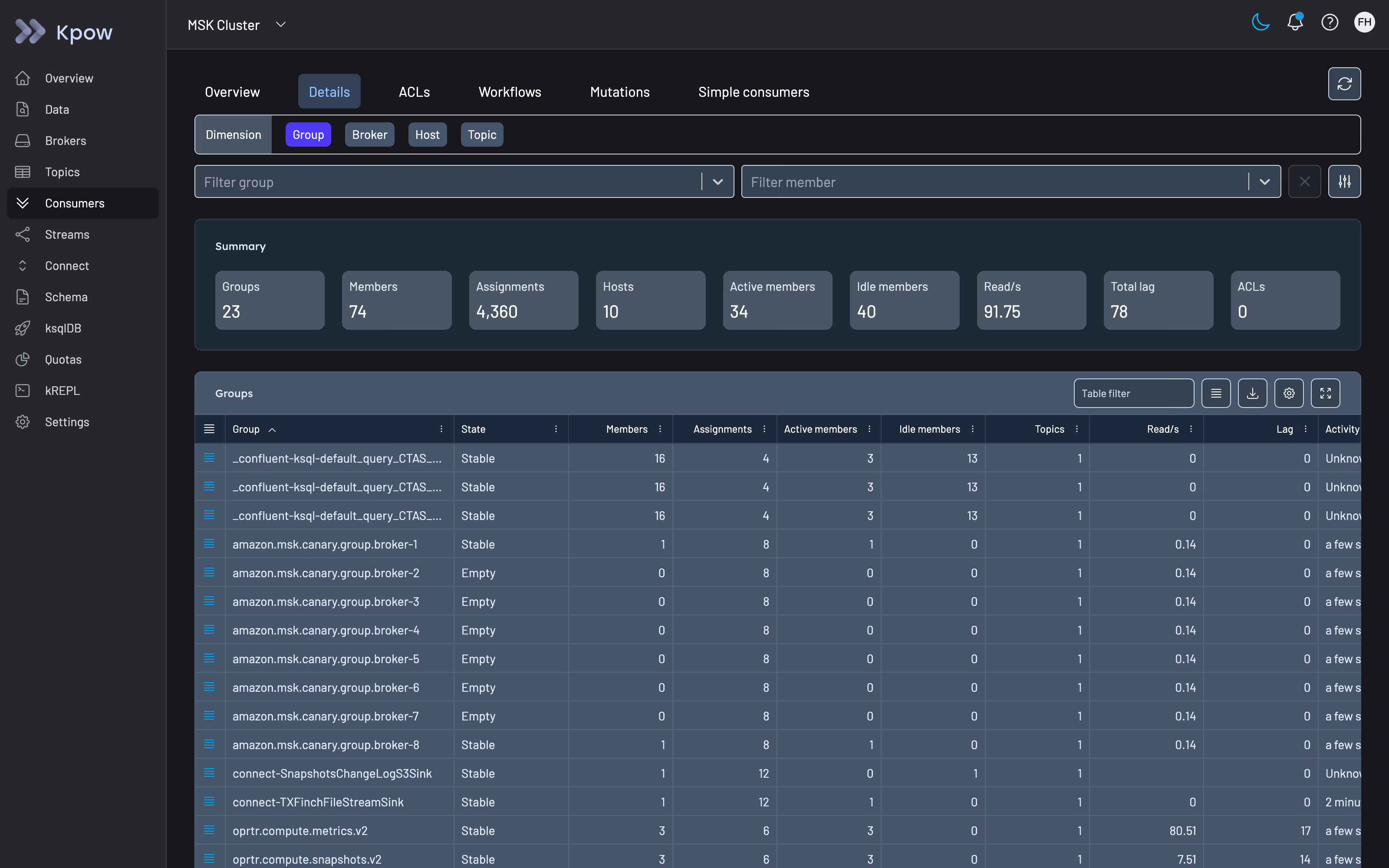

Within Kpow's consumer group views, you can inspect per-partition lag across all consumer groups on a topic, which is the most direct signal of key skew in production. When one or two partitions consistently lag behind the rest, the distribution analysis starts there. Kpow's multi-cluster support means you can compare partition health across environments in a single view rather than running kafka-consumer-groups.sh queries against each cluster separately.

For compacted topics, Kpow's topic inspection surfaces message-level metadata including keys, which makes it straightforward to identify producers sending null-keyed records to compacted topics before NoKeyCompactedTopicRecordsPerSec metrics are fully instrumented.

Kpow also supports broker-level health monitoring, so when a hot partition starts manifesting as ISR shrinks or under-replicated partitions, the signal is visible alongside the consumer-group lag data that points to the likely cause.

You can try Kpow for yourself with a free 30-day trial. It connects to any Kafka cluster and deploys via Docker, Helm, or JAR.

Summary

The decisions that matter most, in order of when you make them:

- Before topic creation: choose an immutable, high-cardinality, system-generated identifier. Estimate

distinct(key) / num_partitionsand target at least 20. Over-provision partitions; it is much cheaper than migrating later. - When building producers: standardise the hash function across all client languages. Verify CDC connectors explicitly. Do not use

UniformStickyPartitioneron keyed topics. - In production: monitor per-partition byte rate variance and consumer-group lag skew. Alert on max/avg ratio above 1.5. Alert on

NoKeyCompactedTopicRecordsPerSec > 0for compacted topics. - When skew appears: identify whether it is a data distribution problem or a broker placement problem. For data: composite keys first, then salting, then dedicated topics for intractable cases.

- When migration is necessary: new topic, dual-write, validation, cutover. Never change partition count on a live keyed topic in place.