Amazon Corretto 11 Memory Issues


This post explores memory issues that can be encountered when running a JVM process in a Corretto container.

Update: Support for v2 cgroups container limit detection has been backported to OpenJDK11 and will be released in Corretto 11 as part of the Q3 update on July 19th.

A recent move to v2 cgroups by a number of Linux distributions (including Amazon Linux 2022 and Red Hat Enterprise Linux 9) highlights an issue in Amazon Corretto 11 where the JVM process can cause a Docker container to exit with OOMKilled errors. This issue applies to any Corretto 11 JVM process and is not specific to Kpow.

Corretto is the base image of our main Docker container. We expect the impact of this issue to be limited, and for it to be resolved in Corretto shortly.

This post explains the issue and provides a temporary workaround to constrain the heap usage of Kpow when using our Docker image.

What is Kpow?

Kpow provides enterprise-grade monitoring, management, and control of Apache Kafka Clusters, Schema Registries, ksqlDB, and Connect installations.

Kpow is packaged as a single JAR file or Docker container, and our users deploy it in numerous ways: on-premises, in private or public cloud, or a mixture of both.

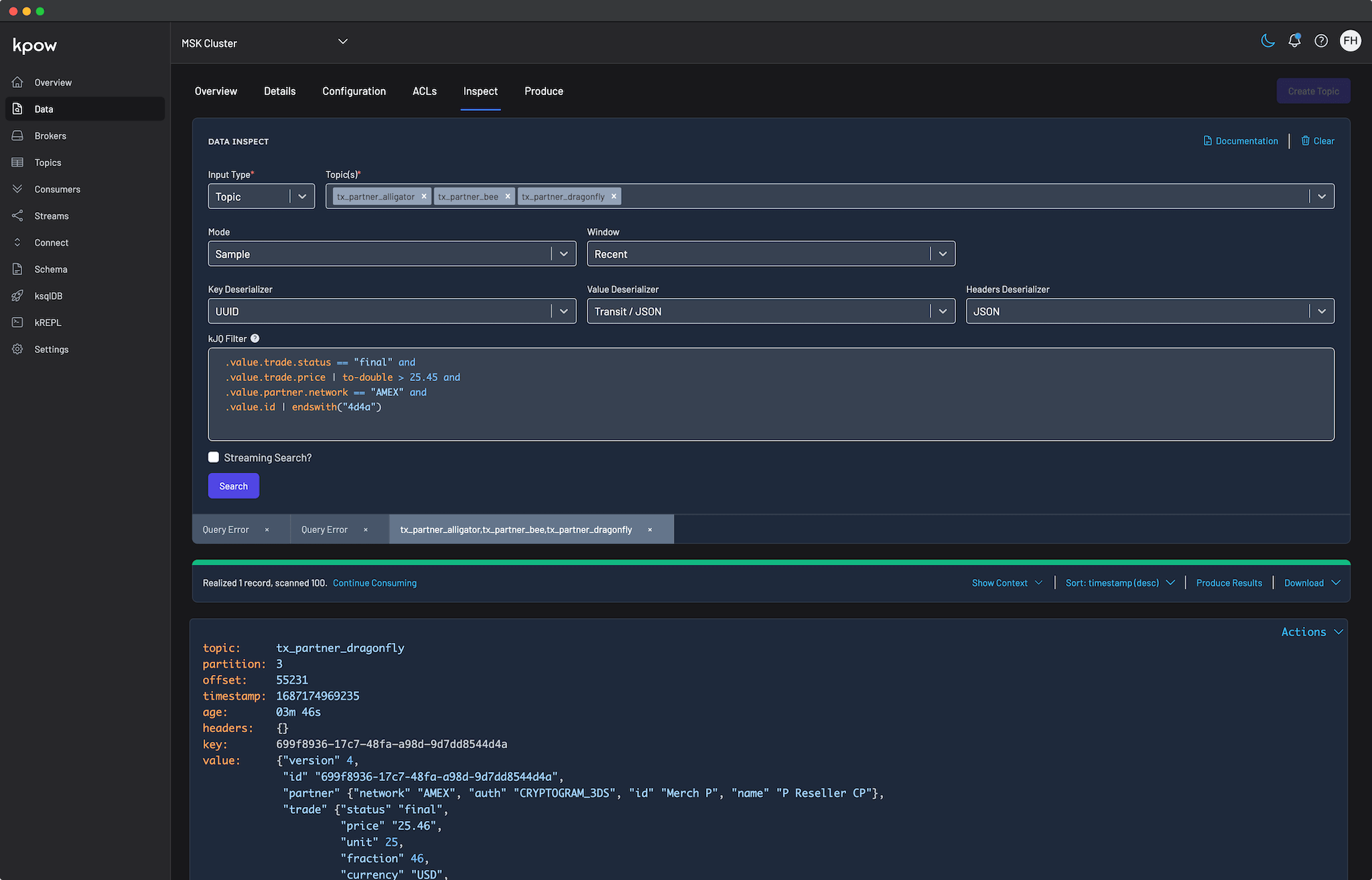

Kpow Data Inspect UI

JVM Memory Management

Kpow is built in Clojure, a language that runs on the JVM and in the browser.

The base deliverable for each Kpow release is a Java JAR. When using the JAR directly we start the JVM with memory constraints, e.g.

```sh
java -Xms2G -Xmx2G -jar ./kpow-latest.jar
```

In the example above, Kpow is started with a fixed 2GiB heap (the initial and maximum heap sizes are equal). If the JVM ran out of memory, we would expect to see OutOfMemoryError entries in the Kpow application logs as the process exited. In practice, this almost never happens.

Docker Memory Management

Every Kpow release is published to Docker Hub.

Common practice is to set resource limits on Docker containers as they are deployed. Support for detecting Linux container resource limits was introduced in OpenJDK10 and later backported to OpenJDK8u191. Prior to that change, the JVM assumed that the CPU and memory available to the container were the same as those of the host machine. Amazon Corretto 11 supports sizing Kpow's heap to 80% of the total memory available to the Docker container with:

- -XX:InitialRAMPercentage=80

- -XX:MaxRAMPercentage=80

These flags are specified as overridable defaults in the Kpow Dockerfile:

```dockerfile
ENV JVM_OPTS="-server -Dclojure.core.async.pool-size=$CORE_ASYNC_POOL_SIZE -XX:MaxInlineLevel=15 -Djava.awt.headless=true -XX:InitialRAMPercentage=80 -XX:MaxRAMPercentage=80"
```

In practice, this means the limits of the Kpow JVM process and its Docker container are aligned, and we rarely receive reports of memory issues.
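To make the 80% figure concrete, here is a minimal sketch of the arithmetic, assuming the JVM applies the percentage directly to the detected container limit. The JVM's own ergonomics also round and align the heap, so the value it reports can differ slightly from this estimate. `heap_mib` is a hypothetical helper, not part of Kpow or the JDK.

```sh
#!/bin/sh
# Hypothetical helper: approximate the heap a JVM would size from a container
# memory limit and a RAM percentage (as in -XX:MaxRAMPercentage=80).
# Usage: heap_mib <limit_bytes> <percent>
heap_mib() {
  limit_bytes=$1
  percent=$2
  # Integer arithmetic: take the percentage of the limit, then convert bytes to MiB.
  echo $(( limit_bytes * percent / 100 / 1048576 ))
}

heap_mib 2147483648 80   # 2GiB container limit -> 1638
heap_mib 1073741824 80   # 1GiB container limit -> 819
```

For a 2GiB limit the result is 1638, the same value used for `-Xms`/`-Xmx` in the workaround later in this post.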

The Problem

The original implementation of container limit detection in the JVM was based on v1 cgroups, which at the time was the only version available in Linux. Support for v2 cgroups container limit detection was introduced in OpenJDK15, has recently been backported to OpenJDK11u, and will be available in the Q3 11.0.16 GA release.

Amazon Corretto is a downstream distribution of OpenJDK, and Corretto 11 doesn't yet support v2 cgroups container limit detection. Instead, the JVM reverts to the behaviour from before JDK10 / JDK8u191, where the resources of the host machine are used in place of the container limits.
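Whether a deployment is affected depends on the cgroup version of the host. A minimal sketch for checking this, assuming cgroups are mounted at the conventional `/sys/fs/cgroup` path; `cgroup_version` is a hypothetical helper name.

```sh
#!/bin/sh
# Hypothetical helper: report which cgroup version is mounted at a path.
# On v2 (unified hierarchy) the root contains a cgroup.controllers file;
# on v1 the root holds one directory per controller, such as memory/.
# An equivalent manual check is: stat -fc %T /sys/fs/cgroup/
# (prints "cgroup2fs" on v2 and "tmpfs" on v1).
cgroup_version() {
  root=${1:-/sys/fs/cgroup}
  if [ -f "$root/cgroup.controllers" ]; then
    echo v2
  elif [ -d "$root/memory" ]; then
    echo v1
  else
    echo unknown
  fi
}

cgroup_version
```

A result of `v2` on a node running an unpatched Corretto 11 image is the combination described in this post.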

Deploying Kpow to Kubernetes where the nodes use v2 cgroups will result in the JVM assigning a maximum heap of 80% of the node's memory, rather than 80% of any limit applied to the container. As Kpow runs, the JVM may grow the heap beyond the container limit. At that point the container is killed by the kernel's OOM killer, and Kubernetes reports an OOMKilled error.
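Inside a container, the kernel exposes the memory limit through cgroup files, and these are the values a container-aware JVM is expected to honour. The sketch below prints the limit for either cgroup version; it assumes the standard `/sys/fs/cgroup` mount and is meant to be run inside the container. `container_mem_limit` is a hypothetical helper.

```sh
#!/bin/sh
# Hypothetical helper: print the container memory limit in bytes.
#   v2: <root>/memory.max                    ("max" means no limit)
#   v1: <root>/memory/memory.limit_in_bytes  (a very large value means no limit)
# Prints "unknown" if neither file is present.
container_mem_limit() {
  root=${1:-/sys/fs/cgroup}
  if [ -f "$root/memory.max" ]; then
    cat "$root/memory.max"
  elif [ -f "$root/memory/memory.limit_in_bytes" ]; then
    cat "$root/memory/memory.limit_in_bytes"
  else
    echo unknown
  fi
}

container_mem_limit
```

Comparing its output with the `MemTotal` line in `/proc/meminfo`, which by default reports the node's memory even inside a container, shows the gap between the limit Kubernetes enforces and the memory an unaware JVM sizes its heap from.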

This has only become an issue as major Linux distributions move to v2 cgroups by default. Amazon Corretto 11 will pick up the backported cgroups v2 implementation in time, at which point this will cease to be an issue for Kpow deployments, regardless of the host machine's Linux distribution.

A Quick Workaround

The Kpow Docker image accepts JVM_OPTS as an environment variable.

You can override the default JVM_OPTS and set explicit JVM memory constraints for Kpow like so:

```
JVM_OPTS=-server -Dclojure.core.async.pool-size=8 -XX:MaxInlineLevel=15 -Djava.awt.headless=true -Xms1638M -Xmx1638M
ENVIRONMENT_NAME=Trade Book (Staging)
BOOTSTRAP=kafka-1:19092,kafka-2:19093,kafka-3:19094
SECURITY_PROTOCOL=SASL_PLAINTEXT
...
...
```

This has the downside of requiring you to configure both the container limits and an environment variable with explicit -Xms/-Xmx values lower than those limits, but it is a practical solution to OOMKilled errors while we await the backporting of v2 cgroup detection to Amazon Corretto 11.
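If you manage several deployments with different limits, the JVM_OPTS line can be derived from the container limit rather than calculated by hand. A minimal sketch, assuming you know the limit in MiB and want the same 80% ratio as the image defaults; `kpow_jvm_opts` is a hypothetical helper, and the non-memory flags are copied from the example env file above.

```sh
#!/bin/sh
# Hypothetical helper: print a JVM_OPTS line for an env file, sizing the heap
# as a percentage (default 80) of the container memory limit given in MiB.
# Usage: kpow_jvm_opts <container_limit_mib> [percent]
kpow_jvm_opts() {
  limit_mib=$1
  percent=${2:-80}
  heap=$(( limit_mib * percent / 100 ))   # integer MiB, rounded down
  # Equal -Xms and -Xmx give a fixed heap, as in the example above.
  printf '%s\n' "JVM_OPTS=-server -Dclojure.core.async.pool-size=8 -XX:MaxInlineLevel=15 -Djava.awt.headless=true -Xms${heap}M -Xmx${heap}M"
}

kpow_jvm_opts 2048
```

Running it with 2048 (a 2GiB limit) reproduces the JVM_OPTS line from the example above; the container limit itself still has to be set separately and kept in sync.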

Long-Term Resolution

Support for v2 cgroup limit detection will be merged into Amazon Corretto 11 in the Q3 release on July 19th.

This issue prompted us to prioritize adoption of Amazon Corretto 17 as the base of our Docker image. OpenJDK17 already supports v2 cgroups, and we hope to avoid similar situations where a fix made in a later version of the JDK has to wait to be backported to earlier versions. JDK17 is the current LTS release of the JDK, so we will shortly provide a tagged release on that base and move to it as the default base image once we have tested it thoroughly.

Issue Confirmation

We can inspect how the JVM in an Amazon Corretto container applies MaxRAMPercentage like so:

```
docker run -m 1GB amazoncorretto:11.0.15 java \
  -XX:MaxRAMPercentage=80 \
  -XshowSettings:vm \
  -version

VM settings:
    Max. Heap Size (Estimated): 4.64G
    Using VM: OpenJDK 64-Bit Server VM

openjdk version "11.0.15" 2022-04-19 LTS
OpenJDK Runtime Environment Corretto-11.0.15.9.1 (build 11.0.15+9-LTS)
OpenJDK 64-Bit Server VM Corretto-11.0.15.9.1 (build 11.0.15+9-LTS, mixed mode)
```

Using Amazon Corretto 11 with a 1GB memory limit on the container and a MaxRAMPercentage of 80%, we find the JVM has an estimated max heap size of 4.64G. The container limit has not been detected, and the heap is sized from the host's memory instead.

```
docker run -m 1GB amazoncorretto:17.0.3 java \
  -XX:MaxRAMPercentage=80 \
  -XshowSettings:vm \
  -version

VM settings:
    Max. Heap Size (Estimated): 792.69M
    Using VM: OpenJDK 64-Bit Server VM

openjdk version "17.0.3" 2022-04-19 LTS
OpenJDK Runtime Environment Corretto-17.0.3.6.1 (build 17.0.3+6-LTS)
OpenJDK 64-Bit Server VM Corretto-17.0.3.6.1 (build 17.0.3+6-LTS, mixed mode, sharing)
```

Switching the image to Amazon Corretto 17 demonstrates correct detection of the container resource limits, with an estimated max heap size of 792.69M, within the 1GB container limit.
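To run this check automatically, for instance when validating a new base image, the Max. Heap Size line can be extracted and compared with the container limit. A sketch using awk, assuming the output format shown above; `max_heap_mib` is a hypothetical helper, and the MiB figure is truncated to a whole number.

```sh
#!/bin/sh
# Hypothetical helper: read `java -XshowSettings:vm -version` output on stdin
# and print the estimated max heap in whole MiB.
max_heap_mib() {
  awk '/Max\. Heap Size \(Estimated\):/ {
    v = $NF                                # e.g. "4.64G" or "792.69M"
    unit = substr(v, length(v), 1)         # trailing unit letter
    n = substr(v, 1, length(v) - 1) + 0    # numeric part
    if (unit == "G") n = n * 1024
    else if (unit == "K") n = n / 1024
    printf "%d\n", n                       # truncate to whole MiB
  }'
}

# Example usage (requires Docker). 2>&1 captures the settings whichever
# stream the JVM writes them to:
#   heap=$(docker run -m 1GB amazoncorretto:11.0.15 java \
#            -XX:MaxRAMPercentage=80 -XshowSettings:vm -version 2>&1 | max_heap_mib)
#   [ "$heap" -lt 1024 ] || echo "max heap of ${heap}MiB exceeds the 1GB limit"
```

Fed the two outputs above, it returns 4751 for Corretto 11 and 792 for Corretto 17, so only the latter passes the check against a 1024MiB limit.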

If enterprise-grade Apache Kafka tooling with a focus on performance and reliability interests you, sign up for a free 30-day trial today.